Tag: ethics

What Counts as AI‑Generated?

Posted by bsstahl on 2026-03-28 and Filed Under: tools

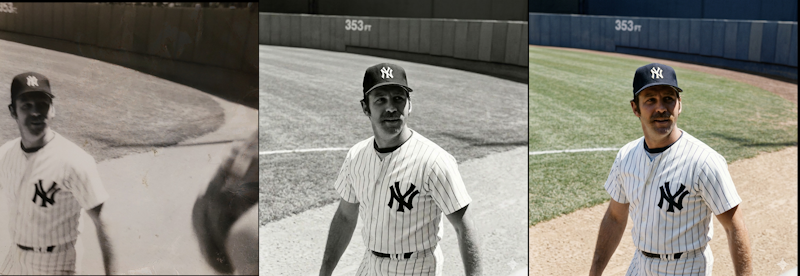

I still have the first camera I ever used - a 126 box camera, similar to a Hawekeye II, that was basically a toy even in its own era. I shot with black‑and‑white film because that's what a kid could afford, and it produced the kind of photos you'd expect from a plastic lens and a shutter that felt like it was powered by hope. One of those photos captured Thurman Munson, the Yankees catcher who would later die in a plane crash, making him something of a larger-than-life figure in my experience. It's not a great photo. It's grainy, off‑center, and full of the accidental foreground clutter you get when you're small, excited, and holding a camera that doesn't care about your artistic intent.

Recently, I ended up with three versions of that same moment:

- The original - a scan of the actual frame I shot as a kid.

- A cleaned‑up version - run through an AI tool that removed some shadows, centered Munson, and erased the stray arms of the people next to me.

- A colorized version - also AI‑assisted, adding color to a scene that never existed in color on film.

All three images are real in the sense that they correspond to something that actually happened, and all three are altered in the sense that every photograph is shaped by the tools available at the time. When I show any version of these images, I could be asked, Is it "AI‑generated"?

Unfortunately, that question really can't be answered without a lot more context. All 3 images used AI as part of the pipeline in some form or another, because depending on how you define AI, even the act of scanning the original likely used a model. The question we really need to answer is: what do we mean when we say something is "AI‑generated"?

The cleaned‑up version of this photo didn't invent anything. It didn't fabricate Munson's face or change the moment. It just did what darkroom techniques, Photoshop, and restoration tools have always done. The colorized version added something new, but colorization has existed for more than a century. The only difference is that a machine did the brushwork instead of a human. What about the original? It's still the moment I captured as a kid with a box camera. The digital version may have passed through modern software on its way to the screen, but the instant in time remains intact.

Even "true" photos can mislead, with or without AI

This is where things get tricky. Any still or moving image can create false impressions with the viewer. Strange lighting, unusual shadows, a frozen instant in time that doesn't really capture the essence of the situation. All of these things happen, and we've experienced them. How many times have you taken a photo of someone who was happy, but looked sad or angry in the shot? Was the dress blue or gold?

In my three images above, the event happened nearly entirely as presented in those photos. Despite that, any of these versions can still create false impressions in the mind of the viewer.

For example:

- It is possible that Munson is talking to someone, or perhaps yelling at them in a way not captured by this frame.

- When I took the picture, there may have been one or more other people just outside the frame, changing the context.

- The cleaned‑up version might imply the scene was less crowded than it really was, because the tool removed the arms of the people next to me.

- The colorized version might imply the grass at Yankee Stadium looked a certain way that day, when the original didn't capture that detail.

- The colorization might suggest Munson wore an undershirt of a particular shade, a detail the model had to invent.

None of these facts are necessarily germane to the image, but they absolutely can alter its interpretation. Still images can present scenes in a framing that doesn't completely do it justice, while AI can introduce confident, plausible details that were never in evidence, whether done maliciously or not.

This is why labeling matters. Not because AI involvement is inherently bad, but because, in most cases, viewers deserve to know which parts of an image are grounded in reality and which parts were reconstructed, inferred, or imagined. However, defining those rules is an area where a poor definition could let some people get away with anything while the rest of us end up having to tag everything as AI generated, turning the label into just more noise.

This isn't even touching the copyright issues

Everything above is about truth: what happened, what didn't, and what an image implies, but there's a whole separate dimension we haven't entered: copyright.

Questions like:

- What training data was used to create the model?

- Who owns the derivative works?

- When does enhancement become transformation?

- What rights do I retain over my own childhood photo once an AI model has touched it?

These aren't footnotes. They're large, unresolved questions that deserve their own analysis and probably their own regulatory framework. Mixing them into the "AI‑generated vs. not" debate only makes everything muddier. So for this post, I'm deliberately setting copyright aside; not because it's unimportant, but because it's too important to treat as a parenthetical.

The Hard Part Is Defining What Matters

The reasons why blanket rules about "AI‑generated content" fall apart are complicated. The line between "generated," "assisted," "enhanced," and "restored" isn't a line at all, it's a gradient. That doesn't mean we shouldn't regulate AI‑involved media. It means we need to regulate AI with language and intent that actually matches reality, and solves the real problems.

There are cases where labeling is essential, but most of it is context specific. If I am posting a picture of a conference talk I gave, I wouldn't feel right adding fake participants in the crowd, but I'd often be fine with editing someone out who asked me to, depending on the reason for doing so. I might not feel the same way if the photograph was being published as part of a story in the news. However, there are some things that should probably always be disclosed:

- Images of things that never happened should be labeled as such.

- Images containing people that don't exist must be disclosed.

- Images where people or evidence is added absolutely require clear disclosure, even if they are believed to be 'real'.

- AI‑assisted reconstructions, such as those built from text descriptions after the fact, should be labeled in way that allows viewers understand what's real and what's assumed.

Those distinctions matter because they speak to truth, provenance, and the potential for harm, and they remain just as important whether AI is part of the process or not.

But my three images of Thurman Munson? They're all the same moment, they differ only in the tools used to reveal it. In most contexts, there is no meaningful change made by these manipulations.

There are already existing sets of rules we can lean on here. The National Press Photographers Association has a Code of Ethics for visual journalists that includes the following:

Editing should maintain the integrity of the photographic image's content and context. Do not manipulate images or add or alter sound in any way that can mislead viewers or misrepresent subjects.

I would ask you, "Does my manipulation of this image mislead viewers or misrepresent subjects?"

This Code of Ethics also includes composition and subject matter rules such as:

- Resist being manipulated by staged photo opportunities

- Be complete and provide context when photographing or recording subjects

- While photographing subjects, do not intentionally contribute to, alter, or seek to alter or influence events

- Do not pay sources or subjects or reward them materially for information or participation

- Do not accept gifts, favors, or compensation from those who might seek to influence coverage

All of which suggests that the editing of images, the part that can be done using AI, is just a small part of the harm that can be done through visual means, albeit one that scales better than most.

Here's the part we can't ignore

AI, in some form, is nearly always involved now. Not the headline‑grabbing generative models that synthesize faces or fabricate events, but the quiet, invisible systems inside scanners, cameras, phones, and photo apps, the ones nobody notices because they don't feel like AI. Processes like sharpening, noise reduction, auto‑contrast, white‑balance correction, lens‑distortion fixes and de‑mosaicing filters are all part of many of the image capture mechanisms we use every day. Other domains have similar tools used for autocorrect, predictive-text, grammar correction, spellcheck, voice-to-text, spam filtering and recommendations. These are all machine‑learning (ML) systems doing work behind the scenes.

So the question can't be "Was AI used?" The questions must be more akin to "What kind of AI was used, how was it used, and to what effect?". These questions need to be answered in the full context of the situation, because the truth of this photo is simple, AI didn't create it, it actually happened. The tools just helped me see it more clearly, but they can also help someone else see something that was never there. Outside of this one childhood snapshot, it's rarely even that simple.

Knowing the difficulty in categorizing these three versions of a childhood photo as 'AI-generated' or not, it is obvious that we can't build policy around such a binary definition. We need rules that focus on intent, impact, and what claims are being made, not on whether a model was somewhere in the toolchain. We will drill into more detail on how we can craft regulations that take these items into account in future posts.

Tags: ai ethics legislation ml opinion

Preserve Section 230 to Protect Free Speech and Competition

Posted by bsstahl on 2025-03-26 and Filed Under: general

An open letter to Senators Kelly and Gallego urging them to oppose any weakening of the protections found in Section 230 of the Communications Decency Act (CDA) of 1996.

Dear Senator,

I am reaching out to express my strong opposition to any modifications or repeal of Section 230 of the Communications Decency Act.

I am a constituent and a professional with 40 years of experience in distributed systems development, including my work on some of the earliest Internet-based applications at Intel Corporation in Chandler.

Section 230 is a foundational element of the Internet's legal framework and altering it could have profound negative impacts on both free speech and competition in the Internet services space. Here are my primary concerns:

Impact on Free Speech

Section 230 provides a crucial liability shield that enables platforms to host diverse content without fear of constant litigation. Repealing or modifying this section would lead to increased censorship as platforms become overly cautious in moderating content. This could stifle free expression and create a chilling effect, where administrators are forced to censor, or shut down operations altogether, out of fear that perfectly legal speech might lead to liabilities for the platform. The open dialogue and exchange of ideas that are core to our democratic principles would be severely compromised.

In addition, modifying or even eliminating Section 230 wouldn't stop bad actors from spreading harmful content, as they are adept at exploiting loopholes and adapting to new platforms. A much better approach lies in addressing the behavior of the bad actors themselves, not transferring the responsibility onto Internet platform administrators. The issues that people seek to solve by modifying Section 230 simply would not be improved by this legislation.

Impact on Competition

The current protections encourage innovation and allow new entrants to compete in the Internet services space. Without these protections, smaller companies and startups would face significant barriers to entry due to the threat of costly litigation and the need to support large staff of content moderators. This could lead to an even greater consolidation of power among a few large corporations, reducing competition and limiting consumer choice. Furthermore, these same increased operational costs could stifle innovation and slow the development of new technologies.

As someone who has been deeply involved in the growth and evolution of Internet technologies, I believe that maintaining the integrity of Section 230 is essential for fostering a vibrant, competitive, and open Internet. I urge you to consider the potential ramifications of modifying this critical piece of legislation and to oppose any efforts that would undermine its foundational principles.

Thank you for your attention to this important matter. I appreciate your service to our state and your consideration of my perspective. Please feel free to contact me if you wish to discuss this issue further.

Sincerely,

Barry Stahl

Software Engineer

Phoenix AZ

https://CognitiveInheritance.com

Tags: legislation net-neutrality ethics opinion social-media

Continuing a Conversation on LLMs

Posted by bsstahl on 2023-04-13 and Filed Under: tools

This post is the continuation of a conversation from Mastodon. The thread begins here.

Update: I recently tried to recreate the conversation from the above link and had to work far harder than I would wish to do so. As a result, I add the following GPT summary of the conversation. I have verified this summary and believe it to be an accurate, if oversimplified, representation of the thread.

The thread discusses the value and ethical implications of Language Learning Models (LLMs).

@arthurdoler@mastodon.sandwich.net criticizes the hype around LLMs, arguing that they are often used unethically, or suffer from the same bias and undersampling problems as previous machine learning models. He also questions the value they bring, suggesting they are merely language toys that can't create anything new but only reflect what already exists.

@bsstahl@CognitiveInheritance.com, however, sees potential in LLMs, stating that they can be used to build amazing things when used ethically. He gives an example of how even simple autocomplete tools can help generate new ideas. He also mentions how earlier LLMs like Word2Vec were able to find relationships that humans couldn't. He acknowledges the potential dangers of these tools in the wrong hands, but encourages not to dismiss them entirely.

@jeremybytes@mastodon.sandwich.net brings up concerns about the misuse of LLMs, citing examples of false accusations made by ChatGPT. He points out that people are treating the responses from these models as facts, which they are not designed to provide.

@bsstahl@CognitiveInheritance.com agrees that misuse is a problem but insists that these tools have value and should be used for legitimate purposes. He argues that if ethical developers don't use these tools, they will be left to those who misuse them.

I understand and share your concerns about biased training data in language models like GPT. Bias in these models exists and is a real problem, one I've written about in the past. That post enumerates my belief that it is our responsibility as technologists to understand and work around these biases. I believe we agree in this area. I also suspect that we agree that the loud voices with something to sell are to be ignored, regardless of what they are selling. I hope we also agree that the opinions of these people should not bias our opinions in any direction. That is, just because they are saying it, doesn't make it true or false. They should be ignored, with no attention paid to them whatsoever regarding the truth of any general proposition.

Where we clearly disagree is this: all of these technologies can help create real value for ourselves, our users, and our society.

In some cases, like with crypto currencies, that value may never be realized because the scale that is needed to be successful with it is only available to those who have already proven their desire to fleece the rest of us, and because there is no reasonable way to tell the scammers from legit-minded individuals when new products are released. There is also no mechanism to prevent a takeover of such a system by those with malicious intent. This is unfortunate, but it is the state of our very broken system.

This is not the case with LLMs, and since we both understand that these models are just a very advanced version of autocomplete, we have at least part of the understanding needed to use them effectively. It seems however we disagree on what that fact (that it is an advanced autocomplete) means. It seems to me that LLMs produce derivative works in the same sense (not method) that our brains do. We, as humans, do not synthesize ideas from nothing, we build on our combined knowledge and experience, sometimes creating things heretofore unseen in that context, but always creating derivatives based on what came before.

Word2Vec uses a 60-dimensional vector store. GPT-4 embeddings have 1536 dimensions. I certainly cannot consciously think in that number of dimensions. It is plausible that my subconscious can, but that line of thinking leads to the the consideration of the nature of consciousness itself, which is not a topic I am capable of debating, and somewhat ancillary to the point, which is: these tools have value when used properly and we are the ones who can use them in valid and valuable ways.

The important thing is to not listen to the loud voices. Don't even listen to me. Look at the tools and decide for yourself where you find value, if any. I suggest starting with something relatively simple, and working from there. For example, I used Bing chat during the course of this conversation to help me figure out the right words to use. I typed in a natural-language description of the word I needed, which the LLM translated into a set of possible intents. Bing then used those intents to search the internet and return results. It then used GPT to summarize those results into a short, easy to digest answer along with reference links to the source materials. I find this valuable, I think you would too. Could I have done something similar with a thesaurus, sure. Would it have taken longer: probably. Would it have resulted in the same answer: maybe. It was valuable to me to be able to describe what I needed, and then fine-tune the results, sometimes playing-off of what was returned from the earlier requests. In that way, I would call the tool a force-multiplier.

Yesterday, I described a fairly complex set of things I care to read about when I read social media posts, then asked the model to evaluate a bunch of posts and tell me whether I might care about each of those posts or not. I threw a bunch of real posts at it, including many where I was trying to trick it (those that came up in typical searches but I didn't really care about, as well as the converse). It "understood" the context (probably due to the number of dimensions in the model and the relationships therein) and labeled every one correctly. I can now use an automated version of this prompt to filter the vast swaths of social media posts down to those I might care about. I could then also ask the model to give me a summary of those posts, and potentially try to synthesize new information from them. I would not make any decisions based on that summary or synthesis without first verifying the original source materials, and without reasoning on it myself, and I would not ever take any action that impacts human beings based on those results. Doing so would be using these tools outside of their sphere of capabilities. I can however use that summary to identify places for me to drill-in and continue my evaluation, and I believe, can use them in certain circumstances to derive new ideas. This is valuable to me.

So then, what should we build to leverage the capabilities of these tools to the benefit of our users, without harming other users or society? It is my opinion that, even if these tools only make it easier for us to allow our users to interact with our software in more natural ways, that is, in itself a win. These models represent a higher-level of abstraction to our programming. It is a more declarative mechanism for user interaction. With any increase in abstraction there always comes an increase in danger. As technologists it is our responsibility to understand those dangers to the best of our abilities and work accordingly. I believe we should not be dismissing tools just because they can be abused, and there is no doubt that some certainly will abuse them. We need to do what's right, and that may very well involve making sure these tools are used in ways that are for the benefit of the users, not their detriment.

Let me say it this way: If the only choices people have are to use tools created by those with questionable intent, or to not use these tools at all, many people will choose the easy path, the one that gives them some short-term value regardless of the societal impact. If we can create value for those people without malicious intent, then the users have a choice, and will often choose those things that don't harm society. It is up to us to make sure that choice exists.

I accept that you may disagree. You know that I, and all of our shared circle to the best of my knowledge, find your opinion thoughtful and valuable on many things. That doesn't mean we have to agree on everything. However, I hope that disagreement is based on far more than just the mistrust of screaming hyperbolists, and a misunderstanding of what it means to be a "overgrown autocomplete".

To be clear here, it is possible that it is I who is misunderstanding these capabilities. Obviously, I don't believe that to be the case but it is always a possibility, especially as I am not an expert in the field. Since I find the example you gave about replacing words in a Shakespearean poem to be a very obvious (to me) false analog, it is clear that at lease one of us, perhaps both of us, are misunderstanding its capabilities.

I still think it would be worth your time, and a benefit to society, if people who care about the proper use of these tools, would consider how they could be used to society's benefit rather than allowing the only use to be by those who care only about extracting value from users. You have already admitted there are at least "one and a half valid use cases for LLMs". I'm guessing you would accept then that there are probably more you haven't seen yet. Knowing that, isn't it our responsibility as technologists to find those uses and work toward creating the better society we seek, rather than just allowing extremists to use it to our detriment.

Update: I realize I never addressed the issue of the models being trained on licensed works.

Unless a model builder has permission from a user to train their models using that user's works, be it an OSS or Copyleft license, explicit license agreement, or direct permission, those items should not be used to train models. If it is shown that a model has been trained using such data sets, and there have been indications (unproven as yet to my knowledge) that this may be the case for some models, especially image-generators, then that is a problem with those models that needs to be addressed. It does not invalidate the general use of these models, nor is it an indictment of any person or model except those in violation. Our trademark and copyright systems are another place where we, as a society, have completely fallen-down. Hopefully, that collapse will not cause us to forsake the value that these tools can provide.

Tags: coding-practices development enterprise responsibility testing ai algorithms ethics mastodon

Beta Tools and Wait-Lists

Posted by bsstahl on 2023-04-12 and Filed Under: tools

Here's a problem I am clearly privileged to have. I'll be working on a project and run into a problem. I search the Internet for ways to solve that problem and find a beta product that looks like a very interesting, innovative way to solve that problem. So, I sign up for the beta and end up getting put on a waitlist. This doesn't help me, at least not right now. So, I go off and find another way to solve my problem and continue doing what I'm doing and forget all about the beta program that I signed up for.

Then, at some point, I get an email from them saying congratulations you've been accepted to our beta program. Well, guess what? I don't even remember who you are or what problem I was trying to solve anymore or even if I actually even signed up for it. In fact, most of the time that I get emails like that, I just assume that it is another spam email.

I understand there are valid reasons for sometimes putting customers on wait-lists. I also understand that sometimes companies just try to create artificial scarcity so that their product takes on a cool factor. Please know that, if this is what you're doing, you're likely losing as many customers as you would gain if not more, and may be putting your very existence at risk.

I wonder how many cool products I've missed out on because of that delay in getting access? I wonder how many cool products just died because they weren't there for people when they actually needed them?

Tags: ethics

Like a River

Posted by bsstahl on 2023-02-06 and Filed Under: development

We all understand to some degree, that the metaphor comparing the design and construction of software to that of a building is flawed at best. That isn't to say it's useless of course, but it seems to fail in at least one critical way; it doesn't take into account that creating software should be solving a business problem that has never been solved before. Sure, there are patterns and tools that can help us with technical problems similar to those that have been solved in the past, but we should not be solving the same business problem over and over again. If we are, we are doing something very wrong. Since our software cannot simply follow long-established plans and procedures, and can evolve very rapidly, even during construction, the over-simplification of our processes by excluding the innovation and problem-solving aspects of our craft, feels rather dangerous.

Like Constructing a Building

It seems to me that by making the comparison to building construction, we are over-emphasizing the scientific aspects of software engineering, and under-emphasizing the artistic ones. That is, we don't put nearly enough value on innovation such as designing abstractions for testability and extensibility. We also don't emphasize enough the need to understand the distinct challenges of our particular problem domain, and how the solution to a similar problem in a different domain may focus on the wrong features of the problem. As an example, let's take a workforce scheduling tool. The process of scheduling baristas at a neighborhood coffee shop is fundamentally similar to one scheduling pilots to fly for a small commercial airline. However, I probably don't have to work too hard to convince you that the two problems have very different characteristics when it comes to determining the best solutions. In this case, the distinctions are fairly obvious, but in many cases they are not.

Where the architecture metaphor makes the most sense to me is in the user-facing aspects of both constructions. The physical aesthetics, as well as the experience humans have in their interactions with the features of the design are critical in both scenarios, and in both cases will cause real problems if ignored or added as an afterthought. Perhaps this is why the architecture metaphor has become so prevalent in that it is easy to see the similarities between the aesthetics and user-experience of buildings and software, even for a non-technical audience. However, most software built today has a much cleaner separation of concerns than software built when this metaphor was becoming popular in the 1960s and 70s, rendering it mostly obsolete for the vast majority of our systems and sub-systems.

When we consider more technical challenges such as design for reliability and resiliency, the construction metaphor fails almost completely. Reliability is far more important in the creation of buildings than it is in most software projects, and often very different. While it is never ok for the structure of a building to fail, it can be perfectly fine, and even expected, for most aspects of a software system to fail occasionally, as long as those failures are well-handled. Designing these mechanisms is a much more flexible and creative process in building software, and requires a large degree of innovation to solve these problems in ways that work for each different problem domain. Even though the two problems can share the same name in software and building construction, and have some similar characteristics, they are ultimately very different problems and should be seen as such. The key metaphors we use to describe our tasks should reflect these differences.

Like a River

For more than a decade now, I've been fascinated by Grady Booch's suggestion that a more apt metaphor for the structure and evolution of the software within an enterprise is that of a river and its surrounding ecosystem G. Booch, "Like a River" in IEEE Software, vol. 26, no. 03, pp. 10-11, 2009. In this abstraction, bank-to-bank slices represent the current state of our systems, while upstream-downstream sections represent changes over time. The width and depth of the river represent the breadth and depth of the structures involved, while the speed of the water, and the differences in speed between the surface (UI) and depths (back-end) represent the speed of changes within those sub-systems.

The life cycle of a software-intensive system is like a river, and we, as developers, are but captains of the boats that ply its waters and dredge its channels. - Grady Booch

I will not go into more detail on Booch's analogy, since it will be far better to read it for yourself, or hear it in his own voice. I will however point out that, in his model, Software Engineers are "…captains of the boats that ply the waters and dredge the channels". It is in this context, that I find the river metaphor most satisfying.

As engineers, we:

- Navigate and direct the flow of software development, just as captains steer their boats ina particular direction.

- Make decisions and take action to keep the development process moving forward, similar to how captains navigate their boats through obstacles and challenges.

- Maintain a highly-functional anomaly detection and early-warning system to alert us of upcoming obstacles such as attacks and system outages, similar to the way captains use sonar to detect underwater obstacles and inspections by their crew, to give them useful warnings.

- Use ingenuity and skill, while falling back on first-principles, to know when to add abstractions or try something new, in the same way that captains follow the rules of seamanship, but know when to take evasive or unusual action to protect their charge.

- Maintain a good understanding of the individual components of the software, as well as the broader architecture and how each component fits within the system, just as captains need to know both the river and its channels, and the details of the boat on which they travel.

- Are responsible for ensuring the software is delivered on time and within budget, similar to how captains ensure their boats reach their destination on schedule.

- May be acting on but one small section at a time of the broader ecosystem. That is, an engineer may be working on a single feature, and make decisions on how that element is implemented, while other engineers act similarly on other features. This is akin to the way many captains may navigate the same waters simultaneously on different ships, and must make decisions that take into account the presence, activities and needs of the others.

This metaphor, in my opinion, does a much better job of identifying the critical nature of the software developer in the design of our software than then that of the creation of a building structure. It states that our developers are not merely building walls, but they are piloting ships, often through difficult waters that have never previously been charted. These are not laborers, but knowledge-workers whose skills and expertise need to be valued and depended on.

Unfortunately this metaphor, like all others, is imperfect. There are a number of elements of software engineering where no reasonable analog exists into the world of a riverboat captain. One example is the practice of pair or mob programming. I don't recall ever hearing of any instances where a pair or group of ships captains worked collaboratively, and on equal footing, to operate a single ship. Likewise, the converse is also true. I know of no circumstances in software engineering where split-second decisions can have life-or-death consequences. That said, I think the captain metaphor does a far better job of describing the skill and ingenuity required to be a software engineer than that of building construction.

To be very clear, I am not saying that the role of a construction architect, or even construction worker, doesn't require skill and ingenuity, quite the contrary. I am suggesting that the types of skills and the manner of ingenuity required to construct a building, doesn't translate well in metaphor to that required of a software engineer, especially to those who are likely to be unskilled in both areas. It is often these very people, our management and leadership, whom these metaphors are intended to inform. Thus, the construction metaphor represents the job of a software developer ineffectively.

Conclusion

The comparisons of creating software to creating an edifice is not going away any time soon. Regardless of its efficacy, this model has come to be part of our corporate lexicon and will likely remain so for the foreseeable future. Even the title of "Software Architect" is extremely prevalent in our culture, a title which I have held, and a role that I have enjoyed for many years now. That said, it could only benefit our craft to make more clear the ways in which that metaphor fails. This clarity would benefit not just the non-technical among us who have little basis to judge our actions aside from these metaphors, but also us as engineers. It is far too easy for anyone to start to view developers as mere bricklayers, rather than the ships captains we are. This is especially true when generations of engineers have been brought up on and trained on the architecture metaphor. If they think of themselves as just workers of limited, albeit currently valuable skill, it will make it much harder for them to challenge those things in our culture that need to be challenged, and to prevent the use of our technologies for nefarious purposes.

Tags: architecture corporate culture enterprise ethics opinion

Teach Students how to Use ChatGPT

Posted by bsstahl on 2022-12-17 and Filed Under: tools

There have been a number of concerns raised, with clearly more to come, about the use of ChatGPT and similar tools in academic circles. I am not an academic, but I am a professional and I believe these concerns to be misplaced.

As a professional in my field, I should and do use tools like ChatGPT to do my job.

I, and the teams I work with, experiment with ways to use tools like ChatGPT better. We use these tools to create the foundation for our written work. We use them to automate the mundane stuff. We use them as thinking tools, to prompt us with ideas we might not have considered. This is not only allowed, it is encouraged!

Why should it be different for students?

There are several good analogs for ChatGPT that we all have used for years, these include:

The predictive text on our mobile phones - It is the same as pressing the middle word on the virtual keyboard to autocomplete a sentence. That is all this tool does, predict what is the most likely next word based on the inputs.

The template in your chosen word processing software (i.e. MS Word or Google Docs) - Both will create a framework for you where you fill in the details. This is really all that ChatGPT does, it just does it in a more visually impressive way.

Grammar and Thesaurus Software - "Suggests" words that can be modified to make the meaning clearer or the language more traditionally appropriate.

Wikipedia, or other information aggregator - A source of text that can be used as a starting point for research, or a source of plagiarized material, at the discretion of the user.

Nobody thinks twice about using any of these tools anymore, though there was certainly concern early-on about Wikipedia. This is probably due to reasons like these:

If anyone, student or professional, produced a work product that was just an unmodified template, it would considered very sloppy and incomplete work, and would be judged as such on its merits.

If anyone, student or professional, produced a work product that was copied from Wikipedia or other source, without significant modification or citation, there would be clear evidence of that fact available via the Internet.

ChatGPT is concerning to academics because it has become good enough at doing the work of these template and predictive tools to pass a higher standard of review, and its use cannot be proven, only given a probability score. However, like all tools, the key is not that it is used, but how it is used.

The text that ChatGPT produces is generated probabilistically. It is not enough just to have it spit out a template and submit it as work product. Its facts need to be verified (and are often wrong). Its "analysis" needs to be tested and verified. Its "writing" needs to be clarified and organized. When you submit work where ChatGPT was used to automate the mundane task of generating the basic layout, you are saying that you have verified the text and that you stand behind it. It is your work and you are approving it. If it has lied, you have lied. If the words it spit-out result in a bad analysis, it is your bad analysis. The words are yours when you submit them regardless of whether they were generated via the neural network of your brain, the artificial neural network of ChatGPT, or some other, perhaps procedural method.

I'll say it clearly for emphasis:

All work should be judged on its merits

Educators should teach how to use these tools responsibly and safely

Academics and professionals alike, please do not attempt to legislate the use of these tools. Instead, focus on how they should be used. Teach ethical and safe usage of these tools in a similar way to how we teach students to use Wikipedia. These productivity aids are not going away, they are only going to get better. We need to show everyone how to use them to their advantage, and to the advantage of their teams and of society.

My field of Software Engineering is primarily about solving problems. To solve problems, we describe solutions to these problems in ways that are easy for a machine to interpret. The only difference between the code I write that goes into a compiler to be turned into machine-executable instructions, and the code I write to go into ChatGPT is the language that I use to describe my intent. Using ChatGPT is just writing a computer program using the English language rather than C# or Python. A process such as that should absolutely be encouraged whether the usage is academic or not.

It is my firm belief that the handwringing about the productivity gains that a fantastic tool like ChatGPT can give us is not only misplaced, it is often dangerously misleading.

Addendum

I am only now realizing I should have used ChatGPT to produce the foundations of this text. A missed opportunity to be certain, though to be fair, I originally intended this to be a one or two liner, not an essay.

Disclosure

I have no stake whatsoever in ChatGPT except as a beta user.

Tags: ai ethics chatgpt

Programmers -- Take Responsibility for Your AI’s Output

Posted by bsstahl on 2018-03-16 and Filed Under: development

plus ça change, plus c'est la même chose – The more that things change, the more they stay the same. – Rush (and others

)

In 2013 I wrote that programmers needed to take responsibility for the output of their computer programs. In that article, I advised developers that the output of their system, no matter how “random” or “computer generated”, was still their responsibility. I suggested that we cannot cop out by claiming that the output of our programs is not our fault simply because we didn’t directly instruct the computer to issue that specific result.

Today, we have a similar problem, only the stakes are much, much, higher.

In the world of 2018, our algorithms are being used in police work and inside other government agencies to know where and when to deploy resources, and to decide who is and isn’t worthy of an opportunity. Our programs are being used in the private sector to make decisions from trading stocks to hiring, sometimes at a scale and speed that puts us all at risk of economic events. These tools are being deployed by information brokers such as Facebook and Google to make predictions about how best to steal the most precious resource we have, our time. Perhaps scariest of all, these algorithms may be being used to make decisions that have permanent and irreversible results, such as with drone strikes. We simply have no way of knowing the full breadth of decisions that AIs are making on our behalf today. If those algorithms are biased in any way, the decisions made by these programs will be biased, potentially in very serious ways and with serious results.

If we take all available steps to recognize and eliminate the biases in our systems, we can minimize the likelihood of our tools producing output that we did not expect or that violates our principles.

All of the machines used to execute these algorithms are bias-free of course. A computer has no prejudices and no desires of its own. However, as we all know, decision-making tools learn what we teach them. We cannot completely teach these algorithms free of our own biases. It simply cannot be done since all of our data is colored by our existing biases. Perhaps the best known example of bias in our data is in crime data used for policing. If we send police to where there is most often crime, we will be sending them to the same places we’ve sent them in the past, since generally, crime involves having a police office in the location to make an arrest. Thus, any biases we may have had in the past about where to send police officers, will be represented in our data sets about crime.

While we may never be able to eliminate biases completely, there are things that we can do to minimize the impact of the biases we are training into our algorithms. If we take all available steps to recognize and eliminate the biases in our systems, we can minimize the likelihood of our tools producing output that we did not expect or that violates our principles.

Know that the algorithm is biased

We need to accept the fact that there is no way to create a completely bias-free algorithm. Any dataset we provide to our tools will inherently have some bias in it. This is the nature of our world. We create our datasets based on history and our history, intentionally or not, is full of bias. All of our perceptions and understandings are colored by our cognitive biases, and the same is true for the data we create as a result of our actions. By knowing and accepting this fact, that our data is biased, and therefore our algorithms are biased, we take the first step toward neutralizing the impacts of those biases.

Predict the possible biases

We should do everything we can to predict what biases may have crept into our data and how they may impact the decisions the model is making, even if that bias is purely theoretical. By considering what biases could potentially exist, we can watch for the results of those biases, both in an automated and manual fashion.

Train “fairness” into the model

If a bias is known to be present in the data, or even likely to be present, it can be accounted for by defining what an unbiased outcome might look like and making that a training feature of the algorithm. If we can reasonably assume that an unbiased algorithm would distribute opportunities among male and female candidates at the same rate as they apply for the opportunity, then we can constrain the model with the expectation that the rate of accepted male candidates should be within a statistical tolerance of the rate of male applicants. That is, if half of the applicants are men then men should receive roughly half of the opportunities. Of course, it will not be nearly this simple to define fairness for most algorithms, however every effort should be made.

Be Open About What You’ve Built

The more people understand how you’ve examined your data, and the assumptions you’ve made, the more confident they can be that anomalies in the output are not a result of systemic bias. This is the most critical when these decisions have significant consequences to peoples’ lives. A good example is in prison sentencing. It is unconscionable to me that we allow black-box algorithms to make sentencing decisions on our behalf. These models should be completely transparent and subject to our analysis and correction. That they aren’t, but are still being used by our governments, represent a huge breakdown of the system, since these decisions MUST be made with the trust and at the will of the populace.

Build AIs that Provide Insight Into Results (when possible)

Many types of AI models are completely opaque when it comes to how decisions are reached. This doesn’t mean however that all of our AIs must be complete black-boxes. It is true that most of the common machine learning methods such as Deep-Neural-Networks (DNNs) are extremely difficult to analyze. However, there are other types of models that are much more transparent when it comes to decision making. Some model types will not be useable on all problems, but when the options exist, transparency should be a strong consideration.

There are also techniques that can be used to make even opaque models more transparent. For example, a hybrid technique (AI That Can Explain Why & An Example of a Hybrid AI Implementation) can be used to run opaque models iteratively. This can allow the developer to log key details at specific points in the process, making the decisions much more transparent. There are also techniques to manipulate the data after a decision is made, to gain insight into the reasons for the decision.

Don’t Give the AI the Codes to the Nukes

Computers should never be allowed to make automated decisions that cannot be reversed by a human if necessary. Decisions like when to attack a target, execute a criminal, vent radioactive waste, or ditch an aircraft are all decisions that require human verification since they cannot be undone if the model has an error or is faced with a completely unforeseen set of conditions. There are no circumstances where machines should be making such decisions for us without the opportunity for human intervention, and it is up to us, the programmers, to make sure that we don’t give them that capability.

Don’t Build it if it Can’t be Done Ethically

If we are unable to come up with an algorithm that is free from bias, perhaps the situation is not appropriate for an automated decision making process. Not every situation will warrant an AI solution, and it is very likely that there are decisions that should always be made by a human in totality. For those situations, a decision support system may be a better solution.

The Burden is Ours

As the creators of automated decision making systems, we have the responsibility to make sure that the decisions they make do not violate our standards or ethics. We cannot depend on our AIs to make fair and reasonable decisions unless we program them to do so, and programming them to avoid inherent biases requires an awareness and openness that has not always been present. By taking the steps outlined here to be aware of the dangers and to mitigate it wherever possible, we have a chance of making decisions that we can all be proud of, and have confidence in.